Sources: Neil Rhodes CS 152 NN—16 GANs: Mode Collapse, Understanding GANs.

Related to GANs.

As an introduction: a GAN plays a minimax game between two networks.

- The Generator $G$ takes random noise $z$ and outputs a fake sample $G(z)$. The goal is to produce fakes that look real.

- The Discriminator $D$ takes a sample $x$ and outputs the probability $D(x)$ that it is real. The goal is to distinguish real data from fake data.

- They are trained simultaneously and in opposition. This is called adversarial training.

- G minimizes: it pushes $D(G(z)) \to 1$, fooling the discriminator.

- D maximizes: it correctly identifies real ($D(x) \to 1$) and fake ($D(G(z)) \to 0$) samples.
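Written out, the game described in the bullets above is usually stated as the following value function. This is the standard formulation from the GAN literature; the symbols $p_{\text{data}}$ (the real data distribution) and $p_z$ (the noise distribution) are notation assumed here, not taken from these notes.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

The first term is large when $D(x) \to 1$ on real samples. The second is large when $D(G(z)) \to 0$ on fakes, and G lowers it by driving $D(G(z)) \to 1$.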

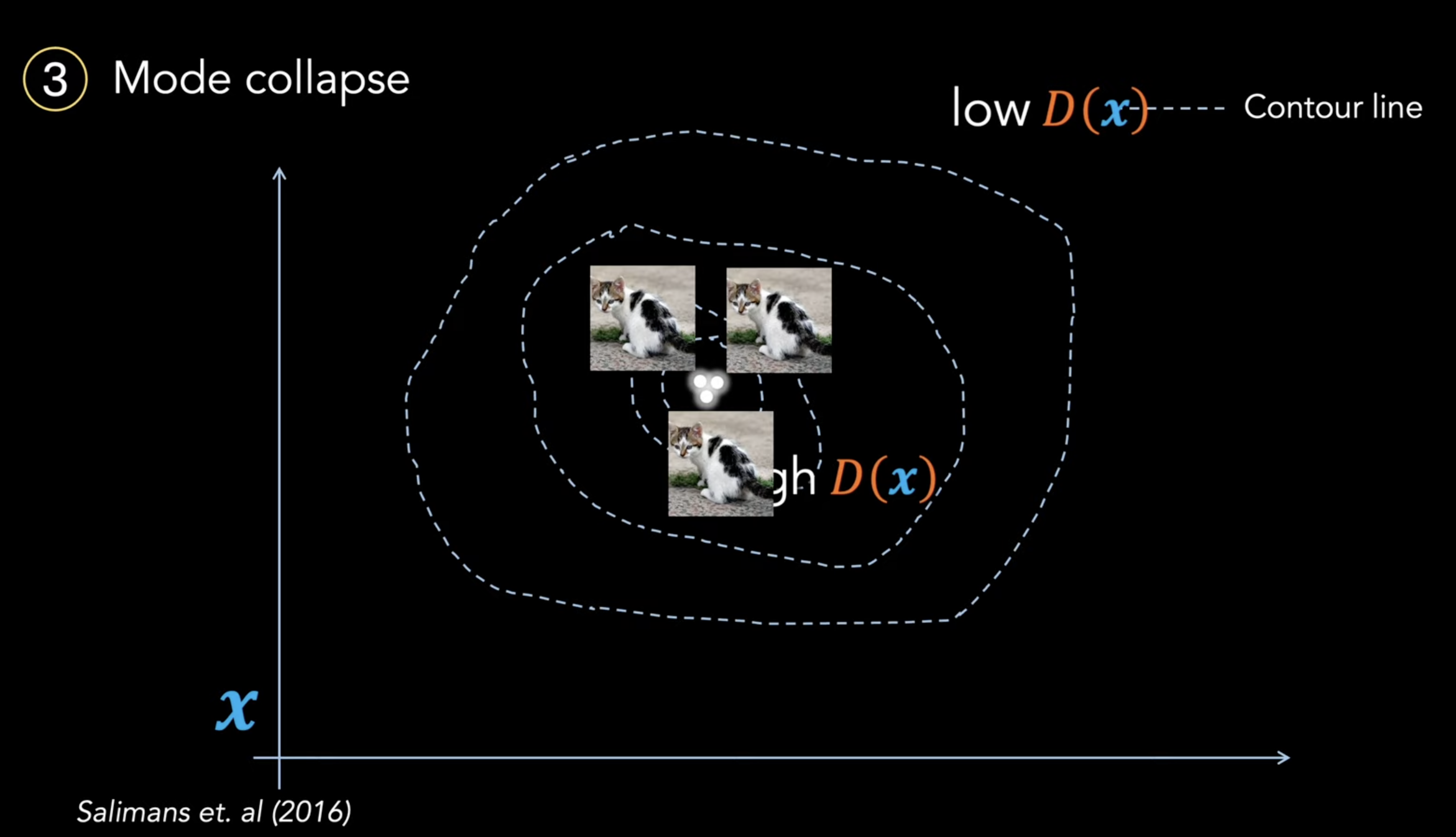

Mode Collapse

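- Given a Generator $G$ and a wide range of noise inputs $z$:

- The Generator seems to only output one class, or something close to one class.

- So its expressivity is rather limited. It has chosen to map every noise vector to (nearly) the same point in data space.

- The training process allows mode collapse to occur: the discriminator judges each sample on its own, so nothing directly penalizes the generator for producing the same output every time.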
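As a toy illustration of the bullets above, the sketch below compares a generator that uses its noise with one that has collapsed. Everything here is hypothetical: the three mode centers, both hand-written "generators", and the `modes_covered` helper are made up for illustration and are not trained networks.

```python
import numpy as np

# Hypothetical centers of three modes in a 2-D data space.
MODES = np.array([[-2.0, 0.0], [0.0, 2.0], [2.0, 0.0]])

def healthy_generator(z):
    # Uses the first noise coordinate to pick a mode, so different z reach different modes.
    idx = np.digitize(z[:, 0], [-0.43, 0.43])  # values in {0, 1, 2}
    return MODES[idx] + 0.1 * z

def collapsed_generator(z):
    # Almost ignores z: every noise vector lands next to the same mode.
    return MODES[1] + 1e-3 * z

def modes_covered(samples):
    # Assign each sample to its nearest mode and count the distinct modes hit.
    dists = np.linalg.norm(samples[:, None, :] - MODES[None, :, :], axis=2)
    return len(np.unique(dists.argmin(axis=1)))

rng = np.random.default_rng(0)
z = rng.normal(size=(1000, 2))
healthy_count = modes_covered(healthy_generator(z))
collapsed_count = modes_covered(collapsed_generator(z))
print(healthy_count, collapsed_count)
```

Both generators receive the same wide range of noise, but only the first spreads its outputs across the data space.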

How to address this?

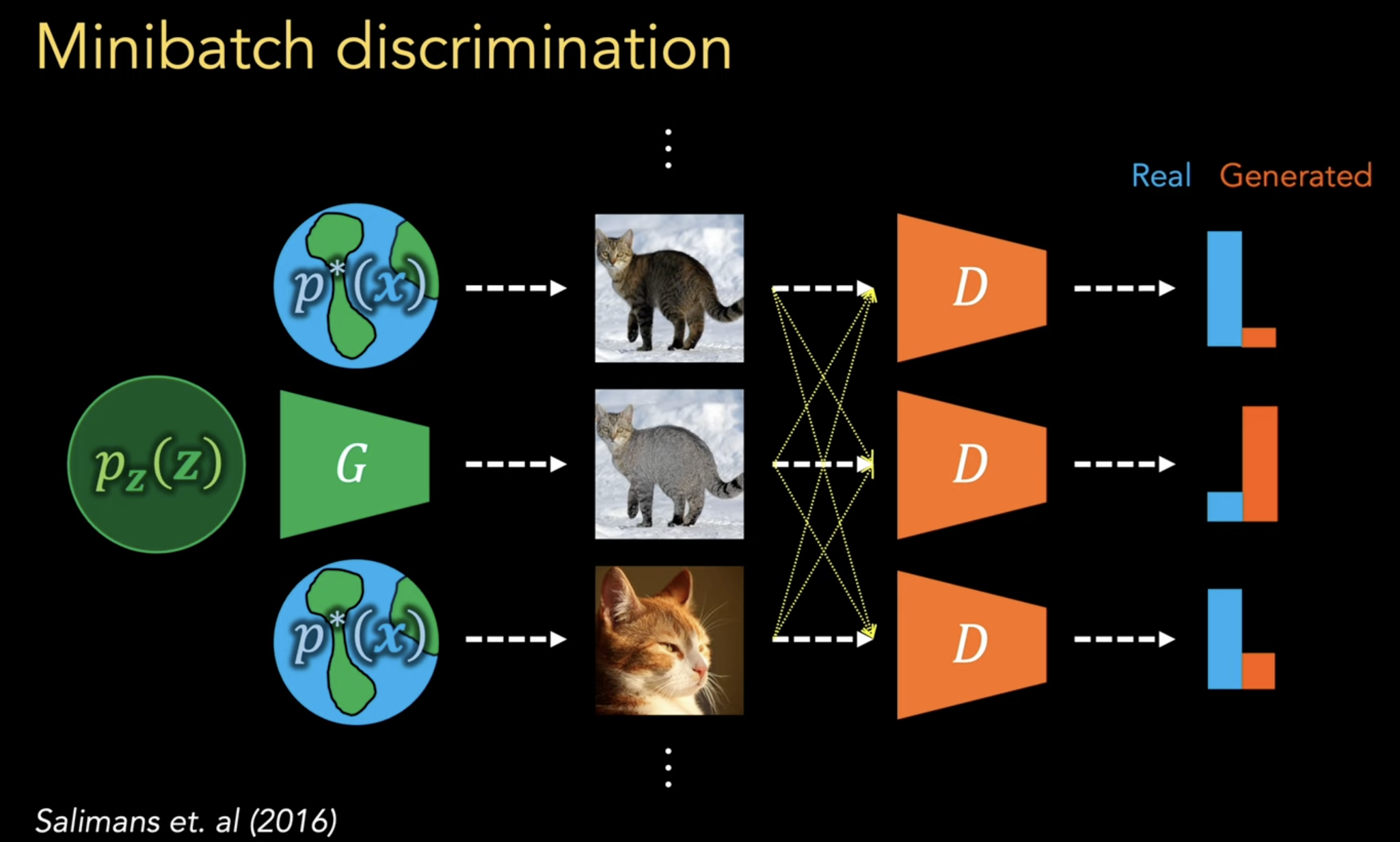

One technique is called minibatch discrimination. It gives the discriminator information about the other samples in the batch as it evaluates each individual sample. This way, the discriminator can learn to detect when the generated points all lie very close to one another.
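A minimal NumPy sketch of the idea, loosely following the common formulation (project each sample's features through a tensor, take L1 distances between samples, sum the negative exponentials). The function name `minibatch_features`, the shapes, and the random tensor `T` are assumptions for illustration. In a real model `T` would be learned and this would sit inside the discriminator.

```python
import numpy as np

def minibatch_features(features, T):
    """features: (N, A) per-sample features; T: (A, B, C) projection tensor.
    Returns an (N, B) array measuring how close each sample is to the rest of the batch."""
    # Project every sample to a B x C matrix.
    M = np.tensordot(features, T, axes=([1], [0]))  # (N, B, C)
    # L1 distance between matching rows for every pair of samples.
    l1 = np.abs(M[:, None, :, :] - M[None, :, :, :]).sum(axis=3)  # (N, N, B)
    # Closeness is near 1 for near-identical samples and near 0 for distant ones.
    closeness = np.exp(-l1)
    # Sum over the other samples in the batch (subtract the self term, exp(0) = 1).
    return closeness.sum(axis=1) - 1.0

rng = np.random.default_rng(0)
T = rng.normal(size=(4, 3, 5))  # random stand-in for a learned tensor
diverse = rng.normal(size=(8, 4))
collapsed = np.tile(rng.normal(size=(1, 4)), (8, 1)) + 1e-3 * rng.normal(size=(8, 4))

diverse_stats = minibatch_features(diverse, T)
collapsed_stats = minibatch_features(collapsed, T)

# The discriminator would see each sample's own features plus its batch statistics.
augmented = np.concatenate([collapsed, collapsed_stats], axis=1)
print(diverse_stats.mean(), collapsed_stats.mean())
```

The collapsed batch produces much larger closeness statistics than the diverse one, which is the signal the discriminator can use to flag a batch of near-identical fakes.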

Another solution is Wasserstein GANs (paper link).
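For orientation, here is a rough sketch of how the Wasserstein GAN losses differ from the probability-based objective. It assumes the original weight-clipping variant, where the discriminator becomes a "critic" that outputs an unbounded score rather than a probability. The function names and the example scores are made up for illustration, and the clipping threshold `c=0.01` is the default commonly quoted for that variant.

```python
import numpy as np

def critic_loss(real_scores, fake_scores):
    # Minimizing this pushes the critic's scores up on real data and down on fakes.
    return fake_scores.mean() - real_scores.mean()

def generator_loss(fake_scores):
    # The generator tries to raise the critic's score on its samples.
    return -fake_scores.mean()

def clip_weights(weights, c=0.01):
    # Weight clipping keeps the critic's parameters in [-c, c] after each update.
    return [np.clip(w, -c, c) for w in weights]

# Made-up critic scores for a small batch.
real_scores = np.array([1.0, 2.0, 3.0])
fake_scores = np.array([-1.0, 0.0, 1.0])

c_loss = critic_loss(real_scores, fake_scores)
g_loss = generator_loss(fake_scores)
clipped = clip_weights([np.array([0.5, -0.002, -0.3])])
print(c_loss, g_loss, clipped[0])
```

Because the critic's score is not squashed into a probability, the generator keeps receiving a useful signal even when the critic separates real from fake easily.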