Information from UTwente, part of my RPCN course. Most of these concepts are covered in the IPCV notes.

Triangulation

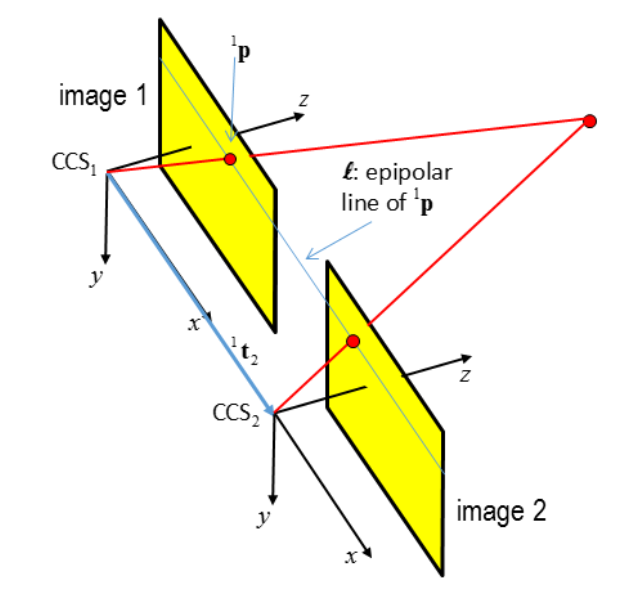

We consider two cameras:

- with known intrinsics, so that each measured image point defines a ray along which the object was seen;

- with known relative pose: we know the orientation (and position) of the second camera relative to the first one;

- that observe the same point from both viewpoints. The line between the two projection centers is called the baseline B. Intersecting the two rays gives the 3D position of the point.

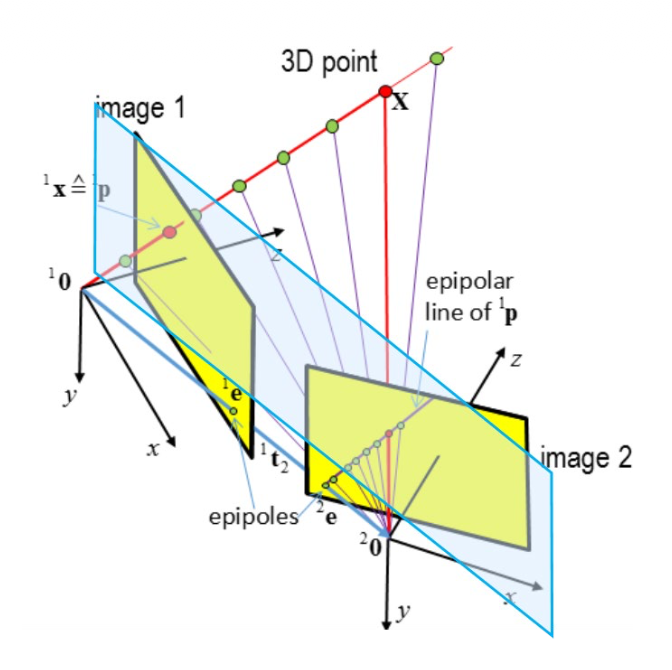

Stereo rectification is the process where two images taken from different perspectives are mathematically warped onto a single common plane so that their image rows align.

- In a “standard” stereo setup, two cameras are placed perfectly side-by-side. Their optical axes are parallel, and their horizontal axes (x-axes) are aligned. Because of this perfect alignment, a point in the real world will appear at the exact same row (y-coordinate) in both images.

- In practice, this ideal configuration is almost never achieved (with a few exceptions, such as room mapping). Once we know how the cameras are oriented relative to each other, we mathematically project both images onto a common virtual plane (the blue plane in the diagram) that is parallel to the baseline. After this projection, the “tilted” images become “normal” (rectified) images.
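A minimal sketch of the rotation behind rectification, assuming the baseline vector T (the second projection centre expressed in the first camera's frame) is known and is not parallel to the optical axis. The hypothetical helper `rectifying_rotation` builds new camera axes whose x-axis points along the baseline, which makes the virtual image plane parallel to the baseline. This is one standard construction (other rectification methods exist), and the intrinsics `K` and the baseline values are made up for illustration.

```python
import numpy as np


def rectifying_rotation(T):
    """Rows of the returned matrix are the new camera axes (hypothetical helper).

    T: baseline vector, i.e. the second projection centre expressed in the
    first camera's frame. Assumes T is not parallel to the optical axis (z).
    """
    T = np.asarray(T, dtype=float)
    e1 = T / np.linalg.norm(T)         # new x-axis: along the baseline
    e2 = np.array([-T[1], T[0], 0.0])  # perpendicular to e1 and to the old z-axis
    e2 /= np.linalg.norm(e2)
    e3 = np.cross(e1, e2)              # new optical axis, right-handed frame
    return np.vstack([e1, e2, e3])


# Hypothetical, slightly misaligned baseline of about 0.1 m
T = np.array([0.1, 0.005, 0.01])
R_rect = rectifying_rotation(T)
print(R_rect @ T)  # baseline now lies on the new x-axis: approx [|T|, 0, 0]

# Hypothetical intrinsics; H maps original pixels to rectified pixels (homogeneous)
K = np.array([[3871.0, 0.0, 960.0],
              [0.0, 3871.0, 540.0],
              [0.0, 0.0, 1.0]])
H_first = K @ R_rect @ np.linalg.inv(K)
```

The second image receives the same rectifying rotation composed with the relative rotation between the cameras; the exact composition depends on the pose convention. In practice, OpenCV's `cv2.stereoRectify` computes these transforms from calibrated intrinsics and the relative pose.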

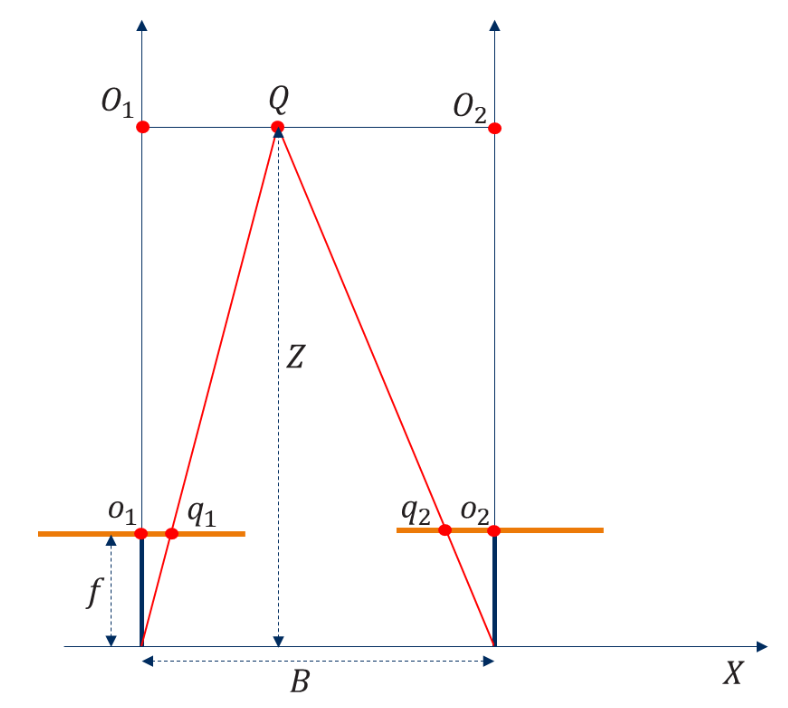

Disparity

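Assuming a pair of stereo-rectified images, the disparity D is the difference between the x-coordinates of two corresponding points in the (2D) image coordinate system:

$$D = x_l - x_r$$

By similar triangles, the depth follows from the focal length f (in pixels) and the baseline B:

$$Z = \frac{f \cdot B}{D}$$

The depth Z therefore increases as the disparity decreases, and the disparity becomes zero for points that are infinitely far away.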
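The relation between disparity and depth can be sketched as a small Python function. The name `depth_from_disparity` and the example focal length of 1000 px and baseline of 0.1 m are hypothetical. Zero disparity is mapped to infinite depth, and negative disparity is rejected because, with the convention D = x_l - x_r, it cannot occur for a point in front of a rectified pair.

```python
def depth_from_disparity(f_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / D for a rectified stereo pair (f and D in pixels, B in metres)."""
    if disparity_px < 0:
        raise ValueError("disparity must be non-negative for D = x_l - x_r")
    if disparity_px == 0:
        return float("inf")  # zero disparity: point infinitely far away
    return f_px * baseline_m / disparity_px


# Hypothetical camera: f = 1000 px, B = 0.1 m
for d in (50.0, 20.0, 5.0, 0.0):
    print(f"D = {d:5.1f} px -> Z = {depth_from_disparity(1000.0, 0.1, d)} m")
```

Halving the disparity doubles the depth, which is why small disparity errors matter most for distant points.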

Depth Accuracy

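The accuracy of the estimated depth depends on the accuracy of the disparity measurement. To see how, take the partial derivative of the depth Z with respect to the disparity D (with f the focal length in pixels and B the baseline):

$$\frac{\partial Z}{\partial D} = -\frac{f \cdot B}{D^2} = -\frac{Z^2}{f \cdot B}$$

This equation shows the effect of a small change in the disparity on the estimated depth. The same relation applies to the propagation of noise in the disparity: if the standard deviation of the disparity measurement is $\sigma_D$, the standard deviation of the depth estimate will be

$$\sigma_Z = \frac{Z^2}{f \cdot B}\,\sigma_D$$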
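The error-propagation formula can be checked numerically. The sketch below uses hypothetical values (f = 1000 px, B = 0.1 m, Z = 2 m, σ_D = 0.2 px) and assumes Gaussian disparity noise, which the text does not specify. It perturbs the noise-free disparity, recomputes Z = fB/D for each sample, and compares the spread of the results with the first-order prediction. Because the formula is a linearization, the two agree closely only while σ_D is small compared with D.

```python
import random
import statistics


def sigma_z_analytic(f_px, baseline_m, z_m, sigma_d_px):
    """First-order error propagation: sigma_Z = Z^2 / (f B) * sigma_D."""
    return z_m ** 2 / (f_px * baseline_m) * sigma_d_px


def sigma_z_monte_carlo(f_px, baseline_m, z_m, sigma_d_px, n=20_000, seed=0):
    """Empirical std of Z = f B / D when D is perturbed by Gaussian noise."""
    rng = random.Random(seed)
    d_true = f_px * baseline_m / z_m  # noise-free disparity in pixels
    depths = [f_px * baseline_m / (d_true + rng.gauss(0.0, sigma_d_px)) for _ in range(n)]
    return statistics.pstdev(depths)


analytic = sigma_z_analytic(1000.0, 0.1, 2.0, 0.2)
empirical = sigma_z_monte_carlo(1000.0, 0.1, 2.0, 0.2)
print(f"analytic: {analytic * 1e3:.2f} mm, Monte Carlo: {empirical * 1e3:.2f} mm")
```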

Exercises

Task 1: Estimate the depth accuracy obtainable with an Arducam stereo camera, assuming a disparity accuracy of 0.2 pixels, a baseline B of 0.1 m and an object distance Z of 0.5 m.

Camera specs: pixel size 1.55 × 1.55 µm, focal length 6 mm.

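Step 1: Calculate the focal length in pixels by dividing the metric focal length by the pixel size:

$$f = \frac{6\,\text{mm}}{1.55\,\mu\text{m/px}} \approx 3871\ \text{px}$$

Step 2: Apply the formula from above:

$$\sigma_Z = \frac{Z^2}{f \cdot B}\,\sigma_D = \frac{(0.5\,\text{m})^2}{3871\,\text{px} \cdot 0.1\,\text{m}} \cdot 0.2\,\text{px} \approx 1.3 \times 10^{-4}\,\text{m}$$

The depth accuracy with these camera specs is approximately 0.13 mm at a distance of 0.5 m.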
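The two steps of Task 1 can be reproduced in a few lines of Python as a quick check of the arithmetic. The helper names `focal_length_px` and `depth_sigma` are hypothetical; all values are the ones given in the task, converted to metres.

```python
def focal_length_px(focal_length_m, pixel_size_m):
    """Convert a metric focal length to pixels: f_px = f / p."""
    return focal_length_m / pixel_size_m


def depth_sigma(f_px, baseline_m, z_m, sigma_d_px):
    """sigma_Z = Z^2 / (f_px * B) * sigma_D, in metres."""
    return z_m ** 2 / (f_px * baseline_m) * sigma_d_px


f_px = focal_length_px(6e-3, 1.55e-6)  # Arducam specs from Task 1
sigma_z = depth_sigma(f_px, baseline_m=0.1, z_m=0.5, sigma_d_px=0.2)
print(f"f = {f_px:.0f} px, sigma_Z = {sigma_z * 1e3:.2f} mm")
# prints: f = 3871 px, sigma_Z = 0.13 mm
```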

Task 2: Assume a disparity accuracy of 0.2 pixels. Estimate the depth accuracy for a distant car at an object distance of Z = 50 m, with a baseline B of 0.54 m. The camera specs remain the same.

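Step 1: Same as before: the focal length in pixels is f ≈ 3871 px.

Step 2: We use exactly the same formula:

$$\sigma_Z = \frac{Z^2}{f \cdot B}\,\sigma_D = \frac{(50\,\text{m})^2}{3871\,\text{px} \cdot 0.54\,\text{m}} \cdot 0.2\,\text{px} \approx 0.24\,\text{m}$$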

So, even though we increased the baseline, the much larger object distance causes the depth accuracy to drop from sub-millimeter precision to about 24 cm: the error grows with Z², but shrinks only linearly with B.
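The same calculation reproduces Task 2 and makes the scaling explicit: since σ_Z is proportional to Z²/B, the ratio between the two tasks depends only on the distances and baselines. The function name `depth_sigma` is hypothetical and is repeated here so the snippet runs on its own.

```python
def depth_sigma(f_px, baseline_m, z_m, sigma_d_px):
    """sigma_Z = Z^2 / (f_px * B) * sigma_D, in metres."""
    return z_m ** 2 / (f_px * baseline_m) * sigma_d_px


f_px = 6e-3 / 1.55e-6  # same camera as in Task 1

sigma_near = depth_sigma(f_px, 0.1, 0.5, 0.2)   # Task 1: B = 0.1 m, Z = 0.5 m
sigma_far = depth_sigma(f_px, 0.54, 50.0, 0.2)  # Task 2: B = 0.54 m, Z = 50 m
print(f"Task 2: sigma_Z = {sigma_far:.3f} m")

# sigma_Z scales with Z^2 / B, so the ratio needs neither f nor sigma_D
ratio = (50.0 / 0.5) ** 2 * (0.1 / 0.54)
print(f"ratio = {sigma_far / sigma_near:.0f} (expected {ratio:.0f})")
```

A hundredfold increase in distance costs a factor of 10,000 in accuracy, which the 5.4 times larger baseline only partly recovers.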

Task 3: Consider the depth accuracy equation above and suppose you can choose between two cameras. Both cameras have the same number of pixels and the same field of view (i.e. opening angle), but the CCD-chip of camera A is twice as large as the CCD-chip of camera B. Hence, camera A has larger pixels. Argue what effect this may have on the accuracy of depth perception.

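Both cameras have the same resolution (number of pixels) and the same FOV. The formula takes the pixel size p into account only through the focal length in pixels, $f = f_{mm}/p$. Camera A has larger pixels ($p_A > p_B$), but to project the same FOV onto a chip twice as large, its lens must also have a proportionally longer metric focal length $f_{mm}$. The two effects cancel, so f (in pixels) is the same for both cameras and the geometric term $Z^2/(f \cdot B)$ is identical. The difference therefore lies in $\sigma_D$: the larger pixels of camera A collect more light, which improves the signal-to-noise ratio and may allow a more precise disparity measurement (in pixels). If so, camera A would achieve a better depth accuracy (smaller $\sigma_Z$), not a worse one.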
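The Task 3 argument can be checked numerically. The sketch below assumes a pinhole model in which the horizontal FOV is 2·atan(w / 2f), with w the sensor width, and uses hypothetical numbers: 4000 pixels across, a 60° FOV, and sensor widths of 6.2 mm (1.55 µm pixels) and 12.4 mm (3.1 µm pixels). The helper `focal_length_px_for_fov` is illustrative only.

```python
import math


def focal_length_px_for_fov(sensor_width_m, n_pixels, fov_deg):
    """Focal length in pixels of a camera whose lens gives horizontal FOV fov_deg."""
    # Same FOV on a wider chip requires a proportionally longer metric focal length
    f_m = sensor_width_m / (2 * math.tan(math.radians(fov_deg) / 2))
    pixel_size_m = sensor_width_m / n_pixels
    return f_m / pixel_size_m


# Hypothetical cameras: same pixel count and FOV, chip A twice as wide as chip B
f_px_b = focal_length_px_for_fov(6.2e-3, 4000, 60.0)
f_px_a = focal_length_px_for_fov(12.4e-3, 4000, 60.0)
print(f"camera A: {f_px_a:.1f} px, camera B: {f_px_b:.1f} px")
```

The sensor width cancels out of the result, which reduces to n_pixels / (2·tan(FOV/2)); only the disparity noise σ_D can then distinguish the two cameras.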